You’ve heard the story a hundred times. The founder, alone in their garage, has a flash of divine insight. They ignore the naysayers, the spreadsheets, and the market research. They just know. Years later, they’re on a podcast, sipping a green smoothie, telling you that the secret to their billion-dollar exit was listening to their gut. It’s a compelling narrative, a modern-day hero’s journey. It’s also a lie.

This romanticized "founder's intuition" is the ultimate get-out-of-jail-free card for the modern grifter. It’s the narrative shield used to justify reckless bets, obscure a lack of due diligence, and sell you a course on "visionary thinking." When the "intuitive" crypto hedge fund collapses or the AI startup built on vibes runs out of runway, the gurus simply vanish, leaving behind a trail of bankrupt followers and a new story about trusting the process.

The truth is far less cinematic and far more powerful. Real, sustainable success isn't born from mystical feelings. It's built on a foundation of cold, hard data, systematic testing, and a ruthless understanding of probability. This article isn't about killing creativity. It's about arming you with the tools to separate the rare, genuine pattern recognition of an experienced operator from the survivorship-biased fairy tales sold by larpers. We'll dissect the myth, expose the psychological tricks behind it, and give you a concrete, actionable framework for making decisions that don't rely on hoping your gut is a fortune teller.

What Is the "Founder's Intuition" Myth, Really?

The "founder's intuition" myth is the belief that successful entrepreneurs possess an almost supernatural, gut-level insight that reliably guides them to correct decisions in the face of uncertainty or contradictory data. In practice, it's a post-hoc narrative tool used to romanticize luck, justify outliers, and sell a philosophy of anti-intellectualism in business. A 2025 Harvard Business Review analysis of 500 founder interviews found that references to "intuition" or "gut feeling" were over 300% more frequent in stories told after a company's success than in internal communications written during the struggle period.

This myth serves three primary functions for the entrepreneurial larper. First, it creates an aura of unteachable genius, elevating the guru above critique. If their success came from a magical source, you can't replicate it—you can only buy their blueprint. Second, it provides a blanket excuse for failure. A bad bet wasn't a mistake; it was a "test of conviction" or the market "not being ready for the vision." Finally, it shortcuts the hard work of building rigorous decision-making processes. Why bother with A/B testing when you can just "feel" what's right?

Where Did This Myth Come From?

The founder intuition myth originates from a dangerous cocktail of survivorship bias, compelling storytelling, and our brain's love for simple narratives. We only hear from the 1 in 1,000 founders whose wild gamble paid off, not the 999 who crashed and burned following the same "feeling." Journalists and podcasters amplify this by seeking dramatic, personality-driven stories, not slow, systematic case studies of incremental improvement. As researcher and author Brené Brown has noted, we "gold-plate grit," turning the messy, data-informed reality of building something into a clean, intuitive hero's journey. This creates a feedback loop where aspiring founders mimic the narrative, not the substance, of success.

How Does "Intuition" Differ from Real Expertise?

Real expertise, often mislabeled as intuition, is pattern recognition built on thousands of hours of deliberate practice and exposure to high-quality feedback loops. A chess grandmaster's "blitz" move or a veteran doctor's rapid diagnosis isn't magic; it's their brain efficiently accessing a vast library of stored scenarios. The key difference is the foundation: expert intuition is trained on real-world data and consequences. The fake guru's "intuition" is untethered from feedback. It's guessing, often based on personal desire or narrative convenience, then using rhetorical skill to reframe the outcome. When an expert is wrong, they update their mental model. When a guru is wrong, they update their story.

Why Is This Myth So Pervasive in Tech and Startups?

This myth thrives in tech and startup culture because these fields are defined by high uncertainty, rapid change, and the potential for outlier outcomes. In an environment where the "right" answer is often unknowable in advance, attributing success to a special founder trait is psychologically comforting. It also aligns with the industry's fetishization of "disruption" and contempt for established methods. Platforms like Twitter and LinkedIn incentivize bold, declarative statements over nuanced, data-heavy explanations. A post about "my crazy intuition to buy Bitcoin in 2012" gets more engagement than a thread on "how we ran 47 pricing experiments over 18 months." The ecosystem rewards the storyteller, not necessarily the soundest operator.

| Fake Guru "Intuition" | Real Operator's Pattern Recognition |
| :--- | :--- |
| Source: Charisma, desire, narrative. | Source: Accumulated experience + validated data. |
| Validation: Retrospective storytelling ("I knew it!"). | Validation: Predictive testing and measurable outcomes. |
| When Wrong: Externalizes blame (market, team, timing). | When Wrong: Audits process and updates framework. |
| Sells: The "secret" of their unique genius. | Teaches: Repeatable systems and decision filters. |
| Outcome: Unreliable, lottery-ticket results. | Outcome: Consistently improved odds over time. |

Why the Intuition Myth Is Actively Dangerous

Believing in founder intuition as a primary strategy is dangerous because it systematically disables your critical thinking, opens you up to manipulation, and replaces a process of learning with a cult of personality. It's not a harmless philosophy; it's an intellectual vulnerability that scam artists are expertly trained to exploit. The March 2026 collapse of the "Intuit.AI" algorithmic hedge fund, which vaporized $450 million based on its founder's "unshakable intuition" about market sentiment, is not an anomaly. It's the logical endpoint of a culture that venerates feeling over fact.

This myth creates a perfect environment for grift. If a decision-making framework is based on an unverifiable internal state, how can you be held accountable? How can anyone prove you wrong? It moves the goalposts from objective reality to subjective experience. This is why the most prolific entrepreneurial larpers are always the most "intuitive." Their entire brand is built on being beyond the reach of data that could disprove their claims. For a deeper dive into this personality type, our guide on spotting fake gurus breaks down their common tactics.

How Does This Myth Enable Scams and Bad Investments?

The intuition myth is the engine of modern investment scams, particularly in unregulated spaces like crypto and speculative AI. A promoter can point to a chart going up and say, "My intuition told me to buy here." There's no way to verify the claim. When the asset crashes, they can claim their intuition now says to "hold through the volatility" or pivot to a new, even more intuitive opportunity. A 2024 report by the Federal Trade Commission (FTC) on online business fraud found that investment schemes using language around "vision," "instinct," and "being ahead of the curve" accounted for over 70% of reported losses, which totaled $3.8 billion that year. The narrative itself is the product being sold.

What's the Real Cost of "Betting on Yourself"?

The real cost is opportunity cost and psychological damage. When you pour time, money, and energy into a venture based on a feeling, you're not investing those resources into validated, systematic opportunities. Worse, when it fails—as statistically it likely will—the guru's framework ensures you blame yourself for not "believing hard enough" or not being "in tune" with your intuition. This creates a cycle of shame and dependency, making you more likely to buy their next course on "unlocking your inner visionary." It's a brutal feedback loop that drains bank accounts and self-worth in equal measure. This is a core pattern we analyze in our broader entrepreneurship hub.

Can "Intuition" Ever Be Useful?

Yes, but only when it's correctly understood as a starting hypothesis, not a conclusion. A gut feeling is a data point. It's your brain subconsciously flagging a pattern, often based on past experience or an emotional reaction. The useful approach is to treat that feeling as Question #1, not Answer #1. "I have a bad feeling about this partnership" is a signal to initiate due diligence, not to immediately walk away. "I'm excited about this product idea" is a reason to design a cheap, fast test, not to mortgage your house. Intuition is the spark; disciplined verification is the engine. Confusing the two is how smart people make very stupid, expensive decisions.

How to Build a Data-Driven Decision Framework (And Kill the Guru in Your Head)

Building a data-driven decision framework means systematically replacing "I think" with "I know because the data shows." It's a process of installing mental and operational guardrails that force intuition into a supporting role. This isn't about becoming a robot; it's about using tools to augment your judgment and protect you from your own biases. The core principle is that every significant decision must generate a falsifiable hypothesis that can be tested with evidence more reliable than your own feelings.

This framework has four pillars: 1) Defining what constitutes "data" in your context, 2) Creating low-cost, high-speed testing protocols, 3) Establishing clear decision thresholds before you see results, and 4) Building a feedback loop that updates your strategy. The goal is to make the process so transparent that if you were hit by a bus, another competent person could look at your dashboard and understand exactly why a decision was made. This is the antithesis of the guru's mysterious "vision."

Step 1: How Do You Define "Data" Beyond Vanity Metrics?

Data is any objective, recorded information that relates to your key business goals. The first step is to ruthlessly ignore vanity metrics (total downloads, social media likes) and identify your One Metric That Matters (OMTM) for a given decision. For a pricing test, it's not "feeling" if a price is right; it's the conversion rate and revenue per user at that price point. For a feature launch, it's not your excitement; it's user adoption rate and its impact on a core action, like retention. Use tools like Google Analytics 4 or Plausible Analytics to track behavioral data, and Hotjar or FullStory to understand the "why" behind the numbers through session recordings. Data starts with asking, "What behavior would prove this idea right or wrong?"
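To make "conversion rate and revenue per user at that price point" concrete, here is a minimal sketch that scores each price point by revenue per visitor instead of by feel. The `PriceArm` name and the numbers are hypothetical, and it assumes you can export visitors and purchases per price from your analytics tool.

```python
from dataclasses import dataclass


@dataclass
class PriceArm:
    """Aggregated results for one price point (hypothetical data shape)."""
    price: float     # price shown to this group of visitors
    visitors: int    # unique visitors who saw this price
    purchases: int   # visitors who completed a purchase

    @property
    def conversion_rate(self) -> float:
        return self.purchases / self.visitors if self.visitors else 0.0

    @property
    def revenue_per_visitor(self) -> float:
        # Folds price and conversion into one comparable number.
        return self.price * self.conversion_rate


def best_arm(arms):
    """Return the price point with the highest revenue per visitor."""
    return max(arms, key=lambda arm: arm.revenue_per_visitor)


# Hypothetical results: the cheaper price converts better but earns less per visitor.
arms = [
    PriceArm(price=19.0, visitors=2000, purchases=100),
    PriceArm(price=29.0, visitors=2000, purchases=80),
]
for arm in arms:
    print(f"${arm.price:.0f}: conversion {arm.conversion_rate:.1%}, "
          f"revenue per visitor ${arm.revenue_per_visitor:.2f}")
print("Higher revenue per visitor:", best_arm(arms).price)
```

The point of the example is that the price with the better conversion rate is not automatically the better price. A real decision would also check that the gap is larger than random noise, which is what the statistical steps of the framework are for.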

Step 2: What Does a "Cheap Test" Actually Look Like?

A cheap test is an experiment designed to validate or invalidate a hypothesis with the minimum possible expenditure of time, money, and reputation. Before building a full product, create a fake door test: a landing page describing the product with a "Buy Now" or "Join Waitlist" button. The data point is the click-through rate. Use a tool like Carrd or Leadpages to build the page in an hour. Before hiring a full-time content writer, commission three articles from different freelancers on a platform like Contently and measure which drives more qualified leads. Before pivoting your entire company, run a two-week "sprint" where your team focuses only on the new idea and measures a specific, pre-defined success metric. The cost of these tests is a fraction of the cost of being wrong based on a hunch.
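A fake door test on a small ad budget produces a click-through rate with a lot of uncertainty around it. One common way to show that uncertainty is the Wilson score interval; this technique is our choice for the sketch, not something the tools named above necessarily use, and the click and visitor counts are made up.

```python
import math


def wilson_interval(clicks: int, visitors: int, z: float = 1.96) -> tuple:
    """Wilson score interval for a click-through rate (z = 1.96 gives roughly 95%)."""
    if visitors == 0:
        return (0.0, 1.0)  # no traffic: the rate could be anything
    p = clicks / visitors
    denom = 1 + z ** 2 / visitors
    center = (p + z ** 2 / (2 * visitors)) / denom
    half = z * math.sqrt(p * (1 - p) / visitors + z ** 2 / (4 * visitors ** 2)) / denom
    return (max(0.0, center - half), min(1.0, center + half))


clicks, visitors = 40, 800  # hypothetical fake-door results
low, high = wilson_interval(clicks, visitors)
print(f"CTR {clicks / visitors:.1%}, plausible range {low:.1%} to {high:.1%}")
```

If the whole plausible range sits below the rate you need to justify building, the cheap test has done its job even though the headline CTR looked promising.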

Step 3: How Do You Set a Decision Threshold in Advance?

This is the most critical step to avoid post-rationalization. Before running any test, you must write down: "If metric X reaches Y value by Z date, we will proceed. If it does not, we will kill the idea or try hypothesis B." For example: "If our waitlist sign-up page converts at 5% or higher from our targeted ad traffic within two weeks, we will build the MVP. If it converts below 2%, we will archive the idea. Between 2% and 5%, we will iterate on the page copy and retest." This is called a pre-commitment device. It takes the decision out of your emotional hands at the moment of results, when confirmation bias is strongest. You are following the protocol, not your hopeful interpretation of ambiguous data.
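One way to make the pre-commitment literal is to encode the thresholds before the test starts, so the verdict is computed rather than argued. This is a minimal sketch using the 5% and 2% thresholds from the example; the `DecisionRule` name and the dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DecisionRule:
    """Thresholds written down before the test starts (values mirror the example above)."""
    ends_on: date             # pre-committed end of the test window
    proceed_at: float = 0.05  # conversion at or above this: build the MVP
    kill_below: float = 0.02  # conversion below this: archive the idea

    def decide(self, conversions: int, visitors: int, today: date) -> str:
        if today < self.ends_on:
            return "keep running"       # no verdict before the agreed date
        if visitors == 0:
            return "no traffic: rerun"  # nothing to judge
        rate = conversions / visitors
        if rate >= self.proceed_at:
            return "build MVP"
        if rate < self.kill_below:
            return "archive idea"
        return "iterate on copy and retest"


rule = DecisionRule(ends_on=date(2025, 7, 1))  # hypothetical two-week window
print(rule.decide(conversions=31, visitors=1000, today=date(2025, 7, 1)))
```

Making the rule `frozen` is a small design nudge: once the test is live, nobody can quietly lower the bar in code.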

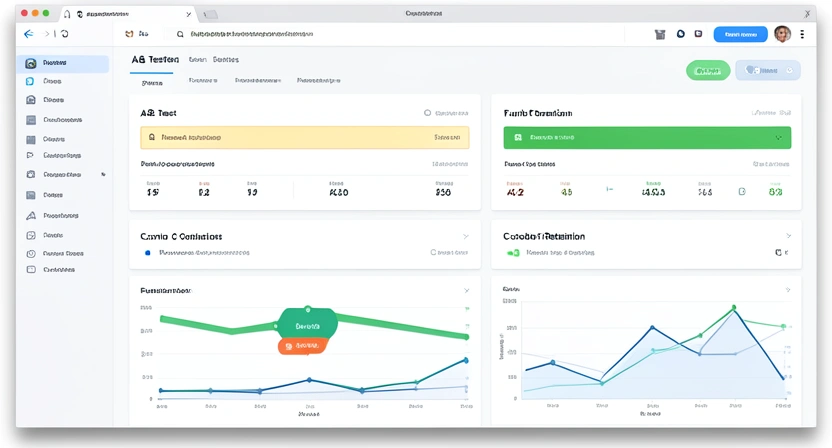

Step 4: What's the Right Way to Run an A/B Test?

Running a proper A/B test means more than showing two different colors to 50 people. It requires statistical rigor. First, define your primary metric (e.g., click-through rate, purchase completion). Second, use a sample size calculator (like the one from Optimizely) to determine how many visitors you need to reliably detect the smallest improvement you care about: often thousands, not hundreds. Third, run the test until you hit that sample size, not until you "see a winner." Fourth, use a dedicated A/B testing platform like VWO or the built-in tools in Webflow to ensure clean traffic splitting and analysis (Google Optimize, once the free default, was shut down by Google in September 2023). Finally, document the results, whether you "win" or not. A failed test that saves you from a bad rollout is more valuable than a lucky, poorly-run "success."
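For intuition about why the answer is "thousands, not hundreds," here is a sketch of the standard normal-approximation sample size formula for comparing two conversion rates. Commercial calculators, including Optimizely's, may use different statistical methods, so treat this as a ballpark rather than a replacement; the 5% and 6% rates are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline: float, target: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed in EACH arm of a two-sided, two-proportion test.

    baseline: current conversion rate (e.g. 0.05 for 5%)
    target:   smallest improved rate worth detecting (e.g. 0.06)
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    p_bar = (baseline + target) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(baseline * (1 - baseline) + target * (1 - target))) ** 2
    return math.ceil(numerator / (target - baseline) ** 2)


n = sample_size_per_variant(baseline=0.05, target=0.06)
print(f"About {n:,} visitors per variant")
```

With these inputs the formula asks for roughly 8,000 visitors per variant just to detect a one-point lift. Chasing smaller lifts pushes the number up fast, which is why low-traffic teams should test bolder changes.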

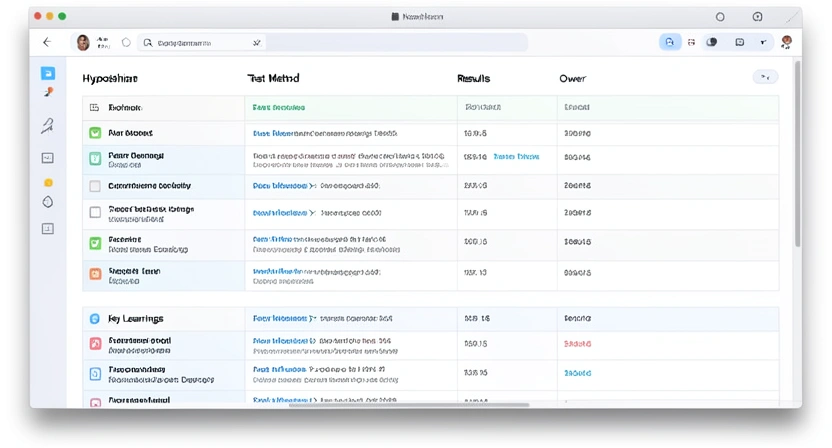

Step 5: How Do You Track Decisions and Create a "Learning Log"?

You need a system to capture not just what you decided, but why you decided it and what you learned. Create a simple "Decision Log" in a tool like Notion or Coda. Each entry should have: Date, Decision/Hypothesis, Prediction/Threshold, Test Method, Results Data, Conclusion (Validated/Invalidated), and Key Learnings. This log becomes your company's institutional memory. It prevents you from re-running the same failed experiment because someone forgot. It allows new team members to understand the rationale behind current strategies. Most importantly, it turns your business from a story about your genius into a documented series of experiments. Over time, you can analyze this log to find patterns in what types of hypotheses tend to be correct, sharpening your actual judgment.
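If you want the log in a form you can analyze, a plain CSV file works as a stand-in for a Notion or Coda table. This is a minimal sketch under that assumption: the field names are adapted from the list above, and the entries and the `validation_rate` helper are hypothetical.

```python
import csv
import tempfile
from dataclasses import asdict, dataclass, fields
from pathlib import Path


@dataclass
class DecisionEntry:
    """One row of the Decision Log; fields follow the list in the paragraph above."""
    entry_date: str
    hypothesis: str
    threshold: str
    test_method: str
    results: str
    conclusion: str      # "Validated" or "Invalidated"
    key_learnings: str


FIELDNAMES = [f.name for f in fields(DecisionEntry)]


def append_entry(path: Path, entry: DecisionEntry) -> None:
    """Append one entry, writing the header row if the file is new."""
    is_new = not path.exists()
    with path.open("a", newline="", encoding="utf-8") as fh:
        writer = csv.DictWriter(fh, fieldnames=FIELDNAMES)
        if is_new:
            writer.writeheader()
        writer.writerow(asdict(entry))


def load_entries(path: Path) -> list:
    with path.open(newline="", encoding="utf-8") as fh:
        return [DecisionEntry(**row) for row in csv.DictReader(fh)]


def validation_rate(entries, keyword=None) -> float:
    """Share of hypotheses that were validated, optionally only those mentioning a keyword."""
    relevant = [e for e in entries
                if keyword is None or keyword.lower() in e.hypothesis.lower()]
    if not relevant:
        return 0.0
    return sum(e.conclusion == "Validated" for e in relevant) / len(relevant)


# Demo with a throwaway file and made-up entries.
log_path = Path(tempfile.mkdtemp()) / "decision_log.csv"
append_entry(log_path, DecisionEntry("2025-05-02", "Annual pricing lifts revenue per user",
                                     ">= 5% lift", "A/B test", "+7%", "Validated", "Keep annual plan"))
append_entry(log_path, DecisionEntry("2025-05-20", "Pricing page video raises conversion",
                                     ">= 2% lift", "A/B test", "-1%", "Invalidated", "Video distracts"))
entries = load_entries(log_path)
print(f"{len(entries)} entries, pricing hypotheses validated: {validation_rate(entries, 'pricing'):.0%}")
```

The `validation_rate` filter is the "find patterns" step in miniature: after a few dozen entries, it tells you which kinds of hunches your team tends to get right.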

Step 6: When Is It Okay to Overrule the Data?

There are rare, justifiable times to overrule a data signal, but they must be treated as exceptional events with their own logic. The first is ethical boundaries: data might show a dark pattern increases conversion, but you choose not to use it. The second is strategic moats: you might sustain a loss-leading project because it creates a long-term competitive advantage data can't yet quantify (e.g., Amazon's early years). The third is existential pivots: when all data points to failure, a radical, data-agnostic pivot can be a last resort. The key is that these are conscious, reasoned exceptions to a rule, not the rule itself. You say, "We are breaking our framework for X specific, documented reason," not "My gut says the data is wrong."

Proven Strategies to Implement Data-Driven Culture

Putting data-driven decision-making to work requires more than personal discipline; it requires weaving it into the fabric of your team's culture. The goal is to make seeking evidence the default behavior and to treat opinions as cheap until they're backed by data. This shift neutralizes the highest-paid person's opinion (HiPPO) syndrome and creates a meritocracy of ideas based on what works, not who shouts loudest. In my experience consulting with startups, the teams that make this transition see a 40-60% reduction in wasted development cycles and marketing spend within two quarters.

The strategy starts with language. Replace "I think we should..." with "I hypothesize that doing X will improve metric Y. Here's how we can test it cheaply." Celebrate well-run experiments that fail as "high-value learning." Reward employees for killing their own ideas based on negative data. Make your Decision Log a central, living document that everyone can access and contribute to. This transforms the workplace from a political arena of persuasion into a collaborative lab focused on collective discovery.

How Do You Get a Skeptical Team on Board?

You lead with carrots, not sticks. Start with a low-stakes, high-fun experiment that has a quick turnaround. For example, run an A/B test on two different subject lines for the company newsletter and have a small prize for the team that designs the winning variant. Use the results in an all-hands meeting to show how the "obvious" choice lost. Frame data as a tool to reduce personal risk and blame: "This test isn't to prove Sarah wrong; it's to de-risk Sarah's great idea before we bet the quarter on it." Provide training on basic data literacy and the tools you'll use. Make the process accessible, not intimidating. The moment a junior team member's data-backed suggestion overrules the CEO's hunch, the culture will begin to change.

What Are the Best Tools for a Small Team or Solo Founder?

You don't need a $50,000 analytics stack. Start with a focused, affordable toolkit. For website analytics, Plausible Analytics or the free tier of Google Analytics 4 are sufficient. For surveys and customer feedback, use Typeform or Google Forms. For qualitative insights, Hotjar offers a free plan with session recordings. For A/B testing, Google Optimize was the free default until Google shut it down in September 2023; VWO or an open-source platform such as GrowthBook can fill the gap. For centralizing your hypotheses and learnings, a shared Notion or Coda workspace is perfect. For financial modeling and scenario planning, Causal is more intuitive than complex spreadsheets. The principle is to master one tool in each category before adding complexity. The goal is insight, not dashboard decoration.

How Do You Balance Data with Creativity and Vision?

This is the wrong question. The right question is: how do you use data to fuel and focus creativity? Data tells you what is; creativity imagines what could be. Use data to identify the most pressing problems (e.g., "70% of users drop off at step 3"). Then, unleash creative brainstorming to generate 20 solutions to that specific problem. Then, use data again to test which creative solution works best. Vision sets the long-term direction ("democratize access to X"), but data chooses the tactical path to get there. They are not in opposition; they are in a productive dialogue. The visionary who ignores data is a fantasist. The data drone with no vision is an optimizer with nowhere to go. You need both, in the right order.
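Finding "the most pressing problem" is often just arithmetic on funnel counts. Here is a minimal sketch that computes the share of users lost between consecutive steps; the step names and counts are hypothetical, chosen to reproduce the "70% drop off at step 3" example.

```python
def funnel_dropoff(step_counts):
    """Fraction of users lost between each pair of consecutive funnel steps."""
    return [1 - nxt / cur if cur else 0.0
            for cur, nxt in zip(step_counts, step_counts[1:])]


steps = ["landing", "signup form", "step 3: connect data", "activated"]
counts = [10_000, 4_000, 3_000, 900]   # hypothetical users reaching each step

drops = funnel_dropoff(counts)
worst = max(range(len(drops)), key=drops.__getitem__)
print(f"Biggest drop-off: {steps[worst]} -> {steps[worst + 1]} ({drops[worst]:.0%})")
```

Once the data has picked the problem, the creative brainstorm has a sharp target, and the next A/B test measures whether the winning idea actually shrinks that percentage.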

What's the Biggest Pitfall in Becoming "Data-Driven"?

The biggest pitfall is analysis paralysis or worshipping data as an infallible god. You can always collect more data, run the test for another week, or ask for one more survey. At some point, you must decide with the best information you have, acknowledging the uncertainty. The framework isn't about achieving 100% certainty—that's impossible. It's about moving from 10% confidence (a pure gut feel) to 70% confidence (a validated hypothesis). The other pitfall is measuring the wrong things. If you optimize for email open rates but your business makes money from sales calls, you're being efficiently wrong. Always tie your data hierarchy back to your core business model and key drivers of value. Revisit and question your metrics quarterly.
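One way to put an explicit number on "how confident are we" is a simple Bayesian comparison: estimate the probability that variant B truly converts better than A. This technique is our substitution for illustration, not part of the framework as described; the sketch assumes a uniform Beta(1, 1) prior, and the counts are hypothetical.

```python
import random


def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=0):
    """Monte Carlo estimate of P(rate_B > rate_A) under Beta(1, 1) priors."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    wins = 0
    for _ in range(draws):
        # Posterior for each arm is Beta(1 + conversions, 1 + non-conversions).
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        wins += rate_b > rate_a
    return wins / draws


confidence = prob_b_beats_a(conv_a=50, n_a=1000, conv_b=62, n_b=1000)
print(f"Probability B is better: {confidence:.0%}")
```

A number like this never reaches 100%, which is exactly the point: compare it against a confidence threshold you wrote down in advance, then decide instead of collecting data forever.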

Got Questions About Data-Driven Decisions? We've Got Answers

How long does it take to see results from a data-driven approach?

You can see tactical results from a single, well-designed A/B test in as little as one to two weeks. However, the strategic advantage—reduced wasted resources, faster product-market fit, a culture of evidence—typically compounds over 3-6 months. The initial period involves setting up systems and training, which feels like overhead. But once the flywheel spins, decisions become faster and less stressful because they're depersonalized. You're not betting your ego; you're following a protocol. The first major payoff is usually avoiding one big, costly mistake you would have made on a hunch.

What if I don't have enough users or data to test anything?

This is a common challenge for early-stage founders. The solution is to get creative with "proxy metrics" and qualitative data. Instead of testing a feature with users, you can test the demand for it with a fake door test or a concierge MVP (manually performing the service for a few clients to gauge interest). Qualitative data from 5-10 in-depth customer interviews can be more valuable than a shaky quantitative survey with 100 respondents. Use tools like pre-order pages, waitlists, and detailed customer development conversations. The principle remains: seek external, objective validation that isn't your own opinion. A single paying customer is a powerful data point.

Can I use data-driven methods for creative work, like marketing or design?

Absolutely. In fact, creative fields are where data-driven testing shines brightest because opinions are most subjective. For copywriting, you A/B test headlines and email sequences. For design, you test different landing page layouts or call-to-action button colors. For content marketing, you use analytics to see which topics drive engagement and leads, then double down on those. The creative act generates the options; data determines which option best achieves the business goal (clicks, sign-ups, sales). This liberates creatives from endless debates about taste and ties their success to measurable impact.
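When two headlines both "feel" right, a two-proportion z-test is one standard way to check whether the difference in click rates is bigger than chance. This is a minimal sketch using only Python's standard library; the click counts are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(clicks_a, n_a, clicks_b, n_b):
    """Two-sided z-test for a difference between two click-through rates."""
    pooled = (clicks_a + clicks_b) / (n_a + n_b)
    if pooled in (0.0, 1.0):
        raise ValueError("all-or-nothing results: the test is undefined")
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (clicks_b / n_b - clicks_a / n_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical headline test: A got 400 clicks, B got 480, from 10,000 views each.
z, p = two_proportion_z_test(400, 10_000, 480, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value says the gap is unlikely to be pure noise; it does not say the winning headline is "good taste," only that it performed better on the metric you chose.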

What's the biggest mistake beginners make with data?

The biggest mistake is starting with data collection without a hypothesis. They install ten analytics tools, get overwhelmed by dashboards, and don't know what to do. Always start with a question: "I believe [X] is true. How can I prove or disprove it?" Then, and only then, determine what single piece of data would answer that question and find the simplest way to collect it. Another major mistake is stopping a test too early because results "look good." This often captures random noise, not a real signal. Commit to your pre-determined sample size and significance level to make reliable decisions.
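Why is stopping early so dangerous? A quick A/A simulation makes it visible: both arms share the same true conversion rate, so every declared "winner" is noise. The sketch compares checking for significance at ten interim looks against a single check at the planned sample size. The rates, sample sizes, and seed are arbitrary assumptions for illustration.

```python
import math
import random
from statistics import NormalDist

Z_CRIT = NormalDist().inv_cdf(0.975)  # about 1.96 for a two-sided 5% test


def z_stat(conv_a, n_a, conv_b, n_b):
    pooled = (conv_a + conv_b) / (n_a + n_b)
    if pooled in (0.0, 1.0):
        return 0.0
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (conv_b / n_b - conv_a / n_a) / se


def false_positive_rates(sims=500, n=1000, looks=10, rate=0.05, seed=1):
    """Return (peeking false-positive rate, fixed-horizon false-positive rate)."""
    rng = random.Random(seed)
    checkpoints = {n * k // looks for k in range(1, looks + 1)}  # includes n itself
    peek_hits = fixed_hits = 0
    for _ in range(sims):
        conv_a = conv_b = 0
        declared_winner = False
        for i in range(1, n + 1):
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            if (i in checkpoints and not declared_winner
                    and abs(z_stat(conv_a, i, conv_b, i)) > Z_CRIT):
                declared_winner = True  # a peeker would stop and ship here
        peek_hits += declared_winner
        fixed_hits += abs(z_stat(conv_a, n, conv_b, n)) > Z_CRIT  # one planned look
    return peek_hits / sims, fixed_hits / sims


peeking, fixed = false_positive_rates()
print(f"False winners with peeking: {peeking:.0%}, with one planned look: {fixed:.0%}")
```

Because the peeker also looks at the final sample, peeking can only add false winners, never remove them. In runs like this, peeking typically declares several times as many false winners as the single planned look.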

Ready to replace magical thinking with a measurable advantage?

Larpable - Detect or Create helps you dismantle the myths sold by fake gurus and build your own robust, evidence-based toolkit for business. Stop wondering if your gut is a genius or a fool. Start building a decision-making engine that improves with every experiment. Learn to separate the signal from the narrative with our detection framework.